Hi! It feels like it’s been a while. I’m trying to balance priorities and make room for the deep work needed to explore the latest AI tech for y’all (and me). Thank you for bearing with me.

A few weeks ago I tested recycling UGC videos for new demographics, but the results had smears. The videos were also less than 3 seconds long.

That’s fine for a visual hook you put at the front of your video ad to capture attention.

But what if you wanted to recycle a longer UGC video?

For this post, I wanted to explore the latest tech to see how ‘perfect’ it can get, and show you the results.

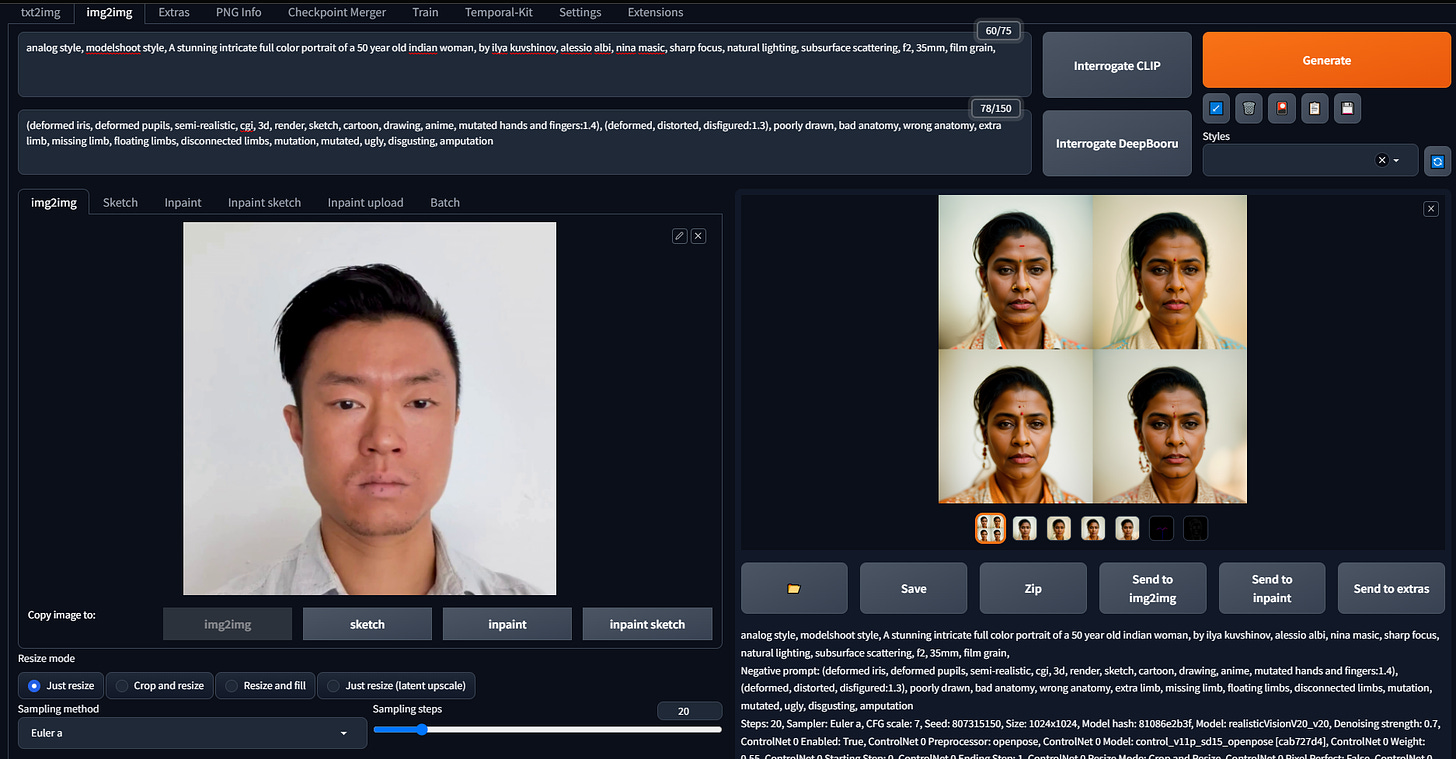

For the workflow I’m using Automatic1111, batch img2img, ControlNet, and TemporalKit. The workflow right now is pretty wonky. I encounter bugs and poor visual results over 50% of the time. But when it works, it’s good. I just suck at it right now.

There are 2 more GitHub repos I want to test that do similar things to TemporalKit: mov2mov and SD-CN-Animation.

Visual Examples

The video above shows the original, V1 result, and V2 result. While generating V1, I made a mistake somewhere. I decided to include it anyway.

V2 ran without a hitch. V2’s text prompt had ‘stone skin’ in it, so that’s why his skin texture looks weird. He’s not a human!

If I want to improve the look, there are some denoising and CFG scale settings to play around with.
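If you’d rather script that playing around than click through the UI, here’s a rough sketch. It assumes the Automatic1111 web UI was launched with the `--api` flag, which exposes an img2img endpoint that accepts `denoising_strength` and `cfg_scale` fields. The helper names, the default values, and the sweep ranges are all my own placeholders for illustration, not the settings used for the videos above.

```python
import base64


def build_img2img_payload(frame_bytes, prompt, denoising_strength=0.4,
                          cfg_scale=7.0, seed=1234, steps=20):
    """Build the JSON body for one img2img request on a single frame.

    All default values here are illustrative assumptions.
    """
    if not 0.0 <= denoising_strength <= 1.0:
        raise ValueError("denoising_strength must be between 0 and 1")
    return {
        # The API expects init images as base64-encoded strings.
        "init_images": [base64.b64encode(frame_bytes).decode("ascii")],
        "prompt": prompt,
        # Lower values stay closer to the original frame.
        "denoising_strength": denoising_strength,
        # Higher values push the result harder toward the text prompt.
        "cfg_scale": cfg_scale,
        # A fixed seed keeps the comparison between settings fair.
        "seed": seed,
        "steps": steps,
    }


def sweep(frame_bytes, prompt, strengths, cfg_scales):
    """Return one payload per (denoising_strength, cfg_scale) combination."""
    return [
        build_img2img_payload(frame_bytes, prompt, d, c)
        for d in strengths
        for c in cfg_scales
    ]
```

Each payload would then be POSTed as JSON to `/sdapi/v1/img2img` on your local web UI (by default `http://127.0.0.1:7860`). Testing the combinations on one blurry frame first is a lot cheaper than re-running a whole video.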

I mainly wanted to see how it would look when the subject is moving around quickly and there’s motion blur.

(By the way, Substack finally allows us to embed videos! Before this, I was linking to YouTube and uploading sh*tty quality GIFs.)

If you want to see what it looks like behind the scenes, here’s one step in how the sausage is made:

Takeaways for growth marketers

This ‘AI filter’ process should sit before elements like text overlay or logos are applied. It should come right after you get the raw assets from the content creator.

After the filter is applied your team can go ahead and apply text overlays, effects, animations, etc.
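If your team tracks production steps in a script or a checklist tool, the ordering rule can be written down as a tiny check. This is only a sketch: the stage names are made up for illustration. The reasoning behind it is my assumption that an img2img pass restyles every pixel in the frame, so any text or logo already baked in would get restyled too.

```python
# Hypothetical stage names; rename them to match your own pipeline.
FINISHING_STEPS = {"text_overlay", "logo", "effects", "animation"}


def filter_runs_on_clean_footage(stages):
    """Return True if no finishing step happens before the AI filter."""
    if "ai_filter" not in stages:
        return True  # Nothing to check when the filter is not used.
    filter_position = stages.index("ai_filter")
    steps_before_filter = stages[:filter_position]
    return not any(step in FINISHING_STEPS for step in steps_before_filter)
```

For example, `["raw_ugc", "ai_filter", "text_overlay", "logo"]` passes the check, while `["raw_ugc", "text_overlay", "ai_filter"]` does not.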

Your filter doesn’t have to be human.

Hope this sparked some lightbulbs.

Manson