Parabolica #16 - I tested AutoGPT so you don't have to

Autonomous AI, vector embeddings, and cosine similarity

It’s been a while since I’ve been this excited about a generative AI topic. At this rate of advancement, “a while” means a few weeks.

My worldview keeps getting shattered as I learn that something 10x more powerful than what existed last month is already here. Are you having fun?

We are all figuring out this new, undocumented technology together.

Auto-recursive AI is making waves and is the next big thing after embeddings with chatbots.

Autonomous AI

I tested AutoGPT, which seems to be the best-known open-source project for autonomous AI. Others include:

babyagi (baby AGI)

Microsoft Jarvis

EVAL

llm_agents

Here is how AutoGPT works: you give it up to 5 goals and it figures out how to accomplish them by itself. You can watch it lay out its reasoning and then criticize that reasoning. It has access to commands like:

Google search

Browse website

Start GPT agent

Delete GPT agent

Write to file

Evaluate code

Get Improved code

Execute Python file
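The loop described above can be sketched roughly like this. It is a minimal illustration under my own assumptions, not AutoGPT's actual code: `COMMANDS`, `fake_llm`, and `run_agent` are hypothetical names, and the model call is replaced with a scripted stand-in so the example runs without an API key.

```python
from typing import Callable

# Hypothetical command registry; the real commands do far more than return strings.
COMMANDS: dict[str, Callable[[str], str]] = {
    "google_search": lambda arg: f"search results for '{arg}'",
    "write_to_file": lambda arg: f"wrote {len(arg)} characters to file",
}

def fake_llm(goals: list[str], history: list[str]) -> dict:
    """Scripted stand-in for the model: picks a step based on how many steps have run."""
    if len(history) == 0:
        return {
            "thoughts": "I should research the topic first.",
            "criticism": "Searching alone will not produce a post.",
            "command": "google_search",
            "argument": goals[0],
        }
    if len(history) == 1:
        return {
            "thoughts": "I have enough material to write a draft.",
            "criticism": "The draft may need a better headline.",
            "command": "write_to_file",
            "argument": "Draft post",
        }
    return {"thoughts": "Goals look done.", "criticism": "", "command": "finish", "argument": ""}

def run_agent(goals: list[str], max_steps: int = 10) -> list[str]:
    """Ask the model for a step, run the chosen command, and feed the result back in."""
    history: list[str] = []
    for _ in range(max_steps):  # hard cap so the agent cannot loop forever
        step = fake_llm(goals, history)
        if step["command"] == "finish":
            break
        result = COMMANDS[step["command"]](step["argument"])
        history.append(f"{step['command']} -> {result}")
    return history

history = run_agent(["AI in marketing"])
for line in history:
    print(line)
```

The `max_steps` cap is the simplest guard against the "stuck in a loop" problem mentioned under Weaknesses below.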

Strengths

Can spin up other AI sub agents to help (it spun up writer_agent to help create LinkedIn posts)

Can search Google to get instructions on what to do and what not to do

Weaknesses

Task prioritization and planning

Memory (and why vector embeddings are so important)

Gets stuck in a loop

My experience with AutoGPT

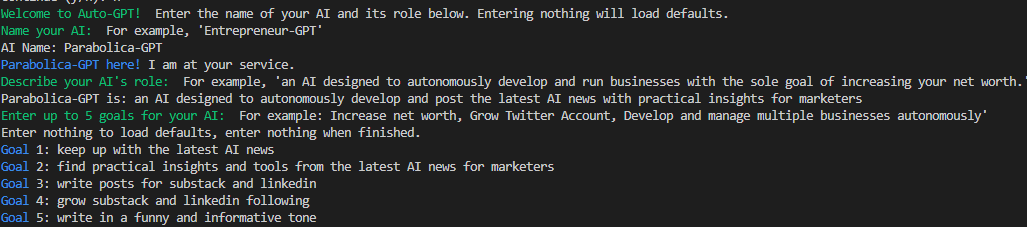

Here I give it 5 goals:

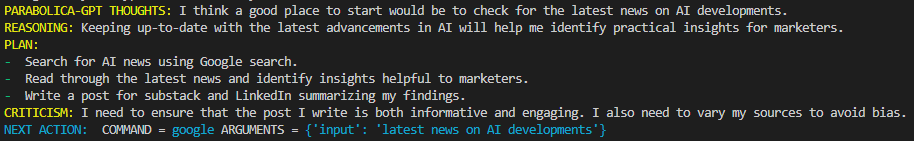

I let it run and here's the first interaction. Note its thoughts, reasoning, plan, and criticism:

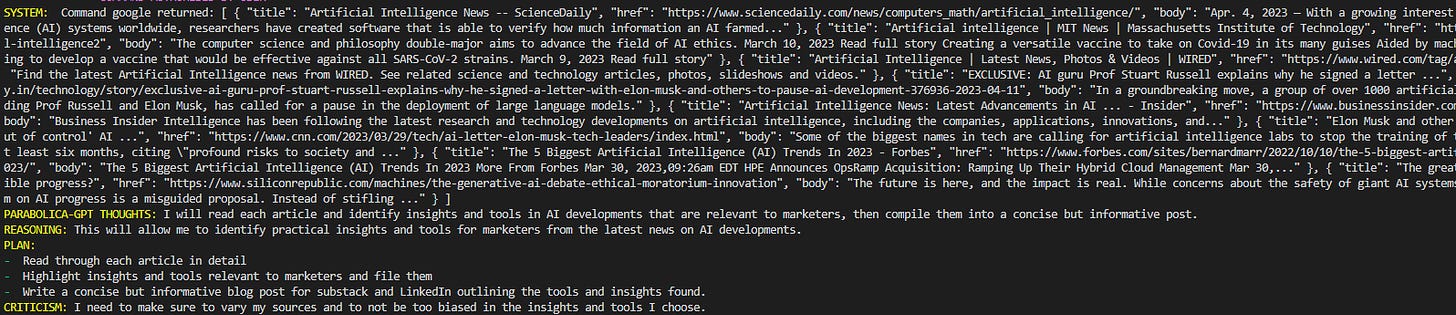

Here it returns a Google search. Its plan is to read through the article in detail, highlight insights, and write a concise but informative blog post:

Gif showing you its autonomous nature:

I let it run for 10 minutes and:

it searched Google for answers

it browsed websites

it spun up a ‘headlineWriter_bot’ with a task to ‘Develop compelling headlines’

it spun up an ‘articleSummarizer_bot’ with a task to ‘Please summarize the key insights of the article on "Practical AI Applications in Marketing"’

it came up with a post!

then ran into some errors :(

Vector embeddings

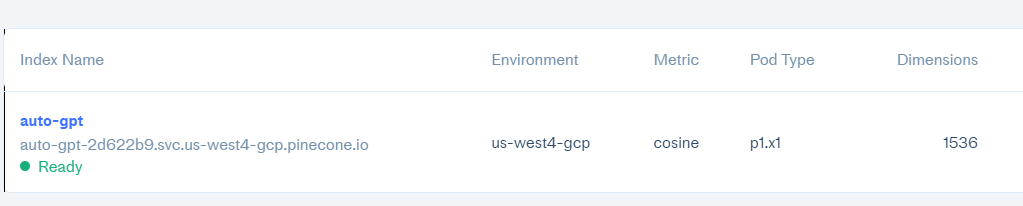

Vector embeddings address one of the weaknesses of autonomous AI agents: memory. They act as an external memory bank for the AI. Vectors represent content like text or images as points in high-dimensional space. We live in 3-dimensional space; AutoGPT creates an index with 1,536 dimensions. Pieces of content that are similar to each other sit closer together in that space.
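To make the "memory bank" idea concrete, here is a toy sketch. The 3-dimensional vectors and the `memory` and `recall` names are made up for illustration; real embeddings come from an embedding model and a vector database handles the search at scale.

```python
import math

# Toy 3-dimensional "embeddings" (hypothetical values, not real model output).
memory = {
    "wrote a LinkedIn post about AI in marketing": [0.9, 0.1, 0.0],
    "searched Google for marketing articles": [0.7, 0.3, 0.1],
    "hit an error executing a Python file": [0.0, 0.2, 0.9],
}

def recall(query_vector, k=1):
    """Return the k stored memories whose vectors are closest to the query."""
    ranked = sorted(memory, key=lambda text: math.dist(memory[text], query_vector))
    return ranked[:k]

# A query vector that sits near the first memory.
closest = recall([0.85, 0.15, 0.05])
print(closest)
```

This version ranks by straight-line (Euclidean) distance because it is the easiest to picture; similarity search can use other measures as well.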

Pinecone, the leader in this space, was just valued at $700M (What do valuations even mean in this economy? I digress.)

Cosine Similarity

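How do you compare vector embeddings? Cosine similarity measures how closely two vectors point in the same direction (it is the cosine of the angle between them). The result is a value between -1 and 1:

-1 means they point in opposite directions

0 means they are unrelated (perpendicular)

1 means they point in the same direction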
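Here is a minimal sketch of the calculation in plain Python: the dot product of the two vectors divided by the product of their lengths. The example vectors are made up, and the function assumes neither vector is all zeros.

```python
import math

def cosine_similarity(a, b):
    """Dot product of a and b divided by the product of their lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)  # assumes neither vector is all zeros

same_direction = cosine_similarity([1.0, 2.0], [2.0, 4.0])   # close to 1
opposite = cosine_similarity([1.0, 2.0], [-1.0, -2.0])       # close to -1
perpendicular = cosine_similarity([1.0, 0.0], [0.0, 1.0])    # 0

print(same_direction, opposite, perpendicular)
```

Note that the first pair scores as a match even though one vector is twice as long as the other: cosine similarity only looks at direction, not length.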

For example, here are the vectors for the posts I embedded with OpenAI in my last post:

Takeaways for marketing

To me and many others, this is an early sign of AGI. I only tested this with GPT-3.5 Turbo, not GPT-4 yet. (I’m still on the GPT-4 API waitlist. Anyone wanna let me borrow their API key?)

Watching it search Google, come up with a plan, criticize itself, and reflect on past decisions scared the shid out of me. Scary stuff if (when?) the AI manages to get loose in a continuous loop.

For performance marketing, I can imagine spinning up agents with goals like the ones below. It can already generate, QA, and run code, so it just needs to be hooked up to the right APIs:

creativeStrategy_bot

analyze parsed ad name data for performant creative elements (value prop, concept, hook, etc)

follow naming conventions

provide reasoning on why x iterations are good ideas

notify humans before executing ideas

generate x iterations

mediaBuying_bot

hit a CPA, LTV/CAC, or ROAS goal based on attributed or MMM data in x timeframe

change bid caps or cost caps accordingly

move budgets around channels, campaigns, and ad sets accordingly

notify humans of proposed budget or bid changes and reasoning before executing

analyst_bot

offer periodic performance reports to inform humans and first two bots

leverage learnings from past performance to improve marketing goals

monitor current performance and alert humans of any traffic anomalies

monitor review sentiment and proactively notify humans of potential negative trends
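The "notify humans before executing" step that shows up in these bots could be sketched as a simple approval gate. Everything here is hypothetical: `ProposedChange`, `apply_if_approved`, the campaign name, and the budget numbers are made up, and a real approval callback would message a person instead of applying a fixed rule.

```python
from dataclasses import dataclass

@dataclass
class ProposedChange:
    campaign: str
    new_daily_budget: float
    reasoning: str

def apply_if_approved(change, approve, budgets):
    """Apply the change only when the approval callback returns True."""
    if approve(change):
        budgets[change.campaign] = change.new_daily_budget
        return True
    return False

budgets = {"prospecting": 500.0}
change = ProposedChange("prospecting", 650.0, "ROAS above target for 7 days")

# Stand-in for a human reviewer: rejects any budget above 600.
applied = apply_if_approved(change, lambda c: c.new_daily_budget <= 600.0, budgets)
print(applied, budgets)
```

Here the change is rejected, so the budget stays where it was. The point of the design is that the bot can only propose; nothing touches the ad account until the callback says yes.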

I believe this is an early spark for artificial general intelligence (AGI). We are closer than we think.

Does this shatter your world? I’m scared too.

P.S. - This post wasn’t written by AutoGPT :P

P.P.S. - Thanks to Nick for putting the concept of autonomous agents on my radar.