Parabolica # 10 - ControlNet for additional controls to image2image

Powerful, open-source, just a little clunky

The beauty of open-source technology is that anyone can contribute to it. A few weeks ago I wrote about the announcement of Gen-1, a closed model by RunwayML. Now there’s an open-source AI called ControlNet that does essentially the same thing, and anyone can use it right now. Meanwhile, Gen-1 is still in closed beta. Some people have been able to test Gen-1, but I’m not one of the lucky few yet.

So what is ControlNet?

https://github.com/lllyasviel/ControlNet

ControlNet is a neural network structure to control diffusion models by adding extra conditions.

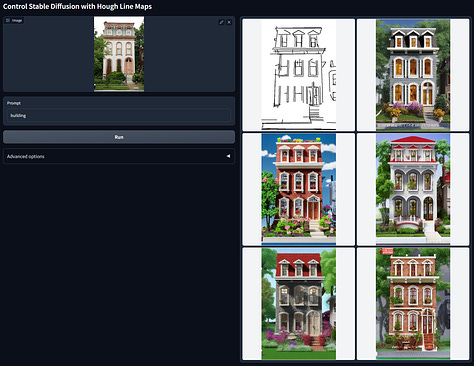

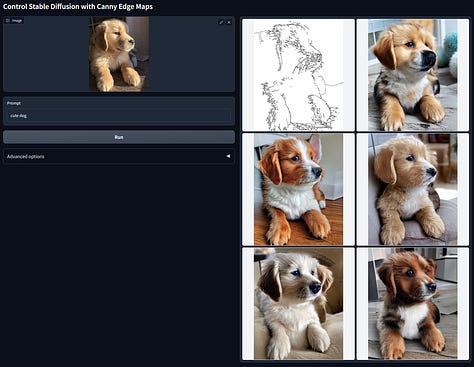

It lets you apply a style to a stick figure or line drawing, which gives you additional control over poses and composition in text2image. It’s similar to using Deforum’s image init or video input modes. The AI community calls this ‘style transfer’: you transfer a style from your prompt onto the source video or image.

What can you do with it?

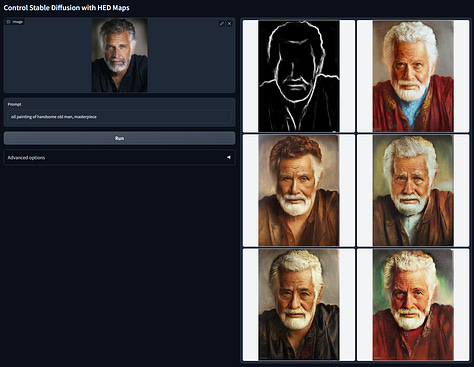

You can generate images and videos with ControlNet. A lot of the product art generated with MidJourney lacks angle consistency: the shots are all from different angles. With ControlNet, we can ‘lock’ the frame. Take a look at the examples below.

Images

Videos

To create videos, the workaround right now is to do a batch image upload for ControlNet to style. The steps go:

1. Find a source video
2. Extract each frame of the video into a folder of .jpg or .png files
3. Input the source directory into ControlNet
4. Apply your prompt
5. Download the folder of new .jpg or .png files
6. Turn the styled frames into the final video
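The frame-splitting and stitching steps happen outside ControlNet, and one common way to do them is with ffmpeg. Here is a minimal sketch that only builds the two ffmpeg commands. The file names (`source.mp4`, `frames`, `styled`, `final.mp4`), the five-digit numbering pattern, and the 24 fps default are my assumptions for illustration, and it assumes the styled frames come out of ControlNet with the same numbered names they went in with.

```python
from pathlib import Path


def extract_frames_cmd(video: str, frames_dir: str) -> list[str]:
    """Build the ffmpeg command that writes every frame as a numbered PNG."""
    # %05d is ffmpeg's pattern for 00001.png, 00002.png, ...
    pattern = (Path(frames_dir) / "%05d.png").as_posix()
    return ["ffmpeg", "-i", video, pattern]


def assemble_video_cmd(styled_dir: str, output: str, fps: int = 24) -> list[str]:
    """Build the ffmpeg command that stitches numbered PNGs back into a video."""
    pattern = (Path(styled_dir) / "%05d.png").as_posix()
    return [
        "ffmpeg",
        "-framerate", str(fps),   # assumed; match the frame rate of your source video
        "-i", pattern,
        "-c:v", "libx264",        # H.264, plays almost everywhere
        "-pix_fmt", "yuv420p",    # pixel format most players expect
        output,
    ]


# Hypothetical file and folder names, just to show the shape of the commands.
print(" ".join(extract_frames_cmd("source.mp4", "frames")))
print(" ".join(assemble_video_cmd("styled", "final.mp4")))
```

To actually run a command, create the output folder first and pass the list to `subprocess.run(cmd, check=True)`. The ControlNet batch step sits between the two commands and still happens in whatever interface you use for it.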

If you want a comprehensive video guide, check out Olivio Sarikas’ video:

Honestly, for videos I would still go with Deforum for animations and video input (style transfer). You can achieve the same effect by playing with the strength settings and increasing the frame intervals to diffuse.

That said, there is a lot of buzz in the AI community about ControlNet right now, and I am excited to see what breakthroughs come in the next few months. People use it in combination with EBSynth to achieve results like the following Star Wars scene:

Better than Gen-1, open-source, and already available? Gen-1 might already be a flop before it is fully released. For a refresher on what Gen-1 is, I covered it in this post.

And guess what, there are two more open-source AI video tools out there:

So what does that mean for marketers?

Product shots for eCom, human poses for yoga/fashion, interior shots for interior designers, card designs for FinTech, the list goes on.

For videos, this means applying different styles to your source video. Styles like:

inkpunk

cyberpunk

line art

by x artist

Studio Ghibli, Pixar, Disney

different face altogether

If you already have a concept that works for you, iterate on it by applying a new style for some low-hanging fruit variations (e.g. this video of an egg cracking is a proven hook for us; will applying these styles improve conversion rate?).

Or you can apply a working style prompt to other video or image sources (e.g. stop-motion works well in your ads, so apply that style to multiple source videos or images for testing).
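If you want to keep track of which source gets which style, a tiny script can lay out the test matrix for you. This is only a sketch: the file names, the prompt descriptions, and the "description, style" prompt format are made up for illustration, so swap in your own.

```python
from itertools import product


def build_test_matrix(sources: dict[str, str], styles: list[str]) -> list[dict[str, str]]:
    """Pair every source with every style and write one prompt per pair."""
    matrix = []
    for (path, description), style in product(sources.items(), styles):
        matrix.append({
            "source": path,
            "style": style,
            "prompt": f"{description}, {style} style",
        })
    return matrix


# Hypothetical sources: file name -> short description used in the prompt.
sources = {
    "egg_crack.mp4": "an egg cracking into a pan",
    "unboxing.mp4": "hands opening a product box",
}
styles = ["inkpunk", "cyberpunk", "line art", "stop-motion"]

matrix = build_test_matrix(sources, styles)
for row in matrix:
    print(row["source"], "->", row["prompt"])
```

Two sources and four styles give eight variations to test, one row each.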

Do any ideas come to mind?